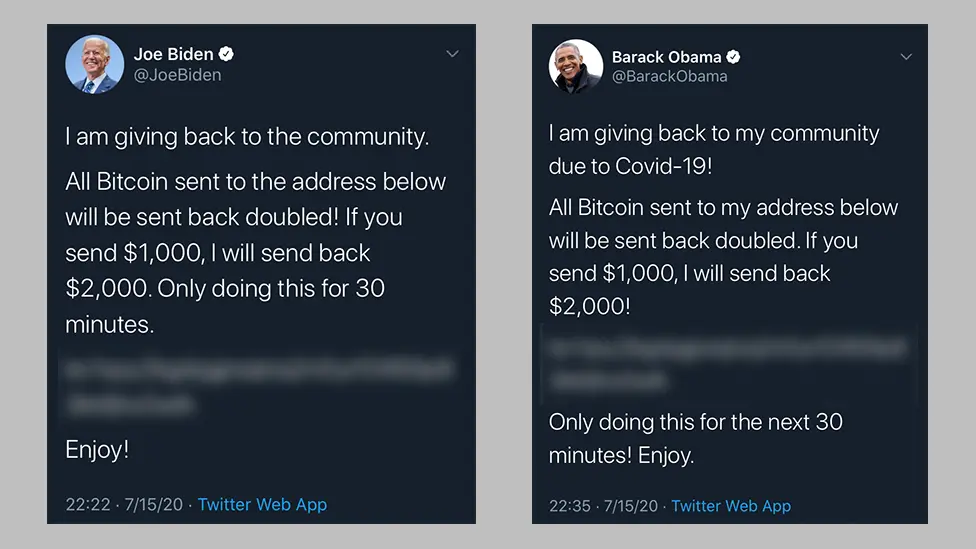

In July 2020, a coordinated attack compromised many prominent Twitter accounts belonging to public figures such as Barack Obama, Elon Musk, Bill Gates, and Joe Biden. The breach started with a series of phone-based social engineering operations. Attackers impersonated Twitter IT staff, contacted employees, and persuaded them to log into a fake VPN portal. When the victims entered their credentials, the information was captured and reused immediately to access Twitter's internal Slack communications. Within a short period, the threat actors gained control of accounts that represented both major corporations and global leaders.

Social Engineering and Operational Exposure

The breach was covered by The New York Times, The Washington Post, the Financial Times, and many other publications, and the New York State Department of Financial Services opened an investigation. The investigation determined that the attackers relied primarily on persuasion and impersonation rather than on any technical vulnerability. They researched Twitter employees with elevated privileges and contacted them directly, invoking routine operational issues to establish credibility. Once the attackers obtained credentials, they reused them in real time to access Twitter's internal tools.

From the original New York Times article:

Mr. O'Connor said other hackers had informed him that Kirk got access to the Twitter credentials when he found a way into Twitter’s internal Slack messaging channel and saw them posted there, along with a service that gave him access to the company’s servers. People investigating the case said that was consistent with what they had learned so far. A Twitter spokesman declined to comment, citing the active investigation.

https://www.nytimes.com/2020/07/17/technology/twitter-hackers-interview.html

The Expanding Market for Voice-Based Deception

The incident at Twitter anticipated a larger trend in cybersecurity. The use of real-time conversation as an entry point has developed into a commercial ecosystem of its own. Research groups have observed the emergence of services that provide synthetic voice creation, scripted dialogue flows, and real-time monitoring of ongoing calls. These systems reduce the expertise required to conduct a convincing impersonation. A small number of samples from a target’s public appearances can now be converted into a synthetic voice that closely replicates tone and cadence.

Studies of voice authentication systems indicate that their security declines significantly when they are presented with synthetic audio. Modest adjustments to speech rate or inflection can defeat common detection models. Experimental defenses such as watermarking and acoustic fingerprinting show potential but remain inconsistent. The asymmetry between offensive capability and defensive maturity continues to widen.

These developments extend beyond high-value corporate targets. Scammers have adopted the same tools to impersonate relatives, service providers, and government agencies. The mechanism remains identical: exploitation of trust through sound and familiarity. Humans are trained from early life to respond to tone and authority in voice; that instinct now operates against them.

Implications for Remote-first Authentication

The events at Twitter illustrate the limitations of security models that depend on perceived authenticity. Technical infrastructure can be reinforced indefinitely, yet the individual answering a phone call remains susceptible to influence. Defensive measures must therefore be restructured to remove human recognition as a basis for authorization.

Any request that involves password resets, access approvals, or the transfer of credentials must be verified independently through robust and secure identity systems. Credentials should never be recorded or exchanged through email, Slack, or Teams, regardless of how trusted or well encrypted the channel appears to be. Secure vaults and dedicated secrets management systems provide a controlled alternative.

Contextual authentication policies that consider device integrity, location, and behavioral norms add a further layer of control. For actions that present heightened risk, hardware-based authentication tokens remain the most reliable safeguard. Training continues to play an important role, though it should focus less on rote awareness and more on the institutional expectation of verification. Simulation exercises that include synthetic voice calls help normalize caution and procedural delay.
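As a rough illustration of the contextual policy described above, the following sketch combines device integrity, location, and behavioral signals into a simple score and requires a hardware token for high-risk actions. Every name, weight, and threshold here (`RequestContext`, `risk_score`, `decide`, the score cutoffs) is a hypothetical assumption chosen for readability, not a reference to any particular product or standard.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_HARDWARE_TOKEN = "require_hardware_token"
    DENY = "deny"


@dataclass(frozen=True)
class RequestContext:
    """Hypothetical signals gathered for one access request."""
    device_managed: bool            # device integrity: is this a managed device?
    country: str                    # coarse location of the request
    usual_countries: frozenset      # where this user normally signs in
    hour_utc: int                   # hour of the request, 0-23
    usual_hours: range              # hours in which this user is normally active
    high_risk_action: bool          # e.g. password reset or access approval
    hardware_token_verified: bool   # a hardware token check already succeeded


def risk_score(ctx: RequestContext) -> int:
    """Add up illustrative weights for each signal that looks unusual."""
    score = 0
    if not ctx.device_managed:
        score += 2
    if ctx.country not in ctx.usual_countries:
        score += 2
    if ctx.hour_utc not in ctx.usual_hours:
        score += 1
    return score


def decide(ctx: RequestContext) -> Decision:
    """Map context to a decision; the thresholds are assumptions for this sketch."""
    score = risk_score(ctx)
    if score >= 4:
        # Too many unusual signals: refuse regardless of other factors.
        return Decision.DENY
    if ctx.high_risk_action or score >= 2:
        # Heightened risk: only a verified hardware token is accepted.
        if ctx.hardware_token_verified:
            return Decision.ALLOW
        return Decision.REQUIRE_HARDWARE_TOKEN
    return Decision.ALLOW


if __name__ == "__main__":
    reset_request = RequestContext(
        device_managed=True,
        country="US",
        usual_countries=frozenset({"US"}),
        hour_utc=14,
        usual_hours=range(8, 19),
        high_risk_action=True,
        hardware_token_verified=False,
    )
    print(decide(reset_request).value)
```

Note that nothing in this decision depends on who the requester sounds like or claims to be; a convincing phone call changes none of the inputs.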

Some organizations have begun to redesign their communication workflows around these realities. Platforms such as Traceless integrate identity verification directly into service desks and chat systems, ensuring that credential resets and access approvals occur only through authenticated channels. By treating verification as part of the workflow rather than an added step, they reduce friction while closing the gap that social engineering continues to exploit.

The breach demonstrated that intrusion no longer depends primarily on technical failure. It depends on human predictability. In environments where communication itself can be fabricated, the design of trust must evolve. Security frameworks that treat every interaction as potentially adversarial are better aligned with current risk realities. Voice remains a powerful medium for connection, but within organizational systems it can no longer serve as proof of identity.

For the individuals behind these systems, this lesson is less about fear and more about adaptation. Every professional who answers a call, verifies a request, or approves an action participates in the daily negotiation of trust. The Twitter incident was a reminder that trust is not a weakness, but it must be structured, verified, and continuously renewed.

If your organization handles sensitive approvals or system access, those interactions are now prime targets for AI-driven impersonation. Traceless integrates with your existing tools in under 10 minutes, adding identity verification and ephemeral messaging that make these attacks significantly harder to pull off. Book a demo to see how it works.