The Confidence Game

Confidence schemes have existed as long as people have relied on trust. Ancient traders, street gamblers, and swindlers across centuries learned the same truth: it is easier to be welcomed in than to break in. By the 20th century, these tactics had become professionalized. Confidence men built entire operations around deceit, complete with offices, scripts, and accomplices. Their success came from reading behavior closely: how people spoke, decided, and hesitated. They built credibility the way others built walls, layer by layer, until the target mistook manipulation for rapport. They learned a person’s habits, language, and values, then mirrored them back until cooperation felt natural.

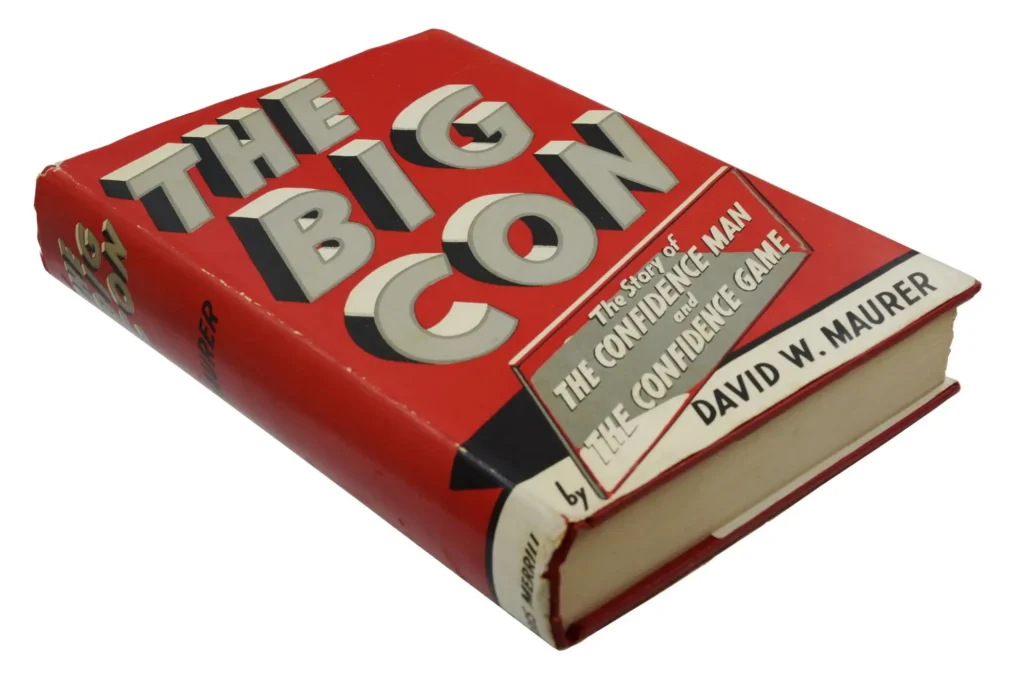

Linguist David Maurer captured this culture in The Big Con, published in 1940. Maurer was fascinated by language as a map of behavior. His research into the slang of the American underworld revealed a parallel system of human engineering, one that depended on rhythm, tone, and performance. He described how swindlers rehearsed every detail of their roles: how to dress, how to write a telegram, how to express just enough doubt to seem authentic. The victims were not gullible; they were convinced. The term “con man” itself is short for “confidence man”: someone who wins not by stealing trust but by earning it. When The Sting appeared decades later, with Paul Newman and Robert Redford recreating one of these elaborate scams, the public could see that deception functioned not as chaos but as choreography.

Cybersecurity would eventually inherit that choreography, but it did not start there. Early breaches resembled burglaries, with attackers forcing their way into systems through open ports and weak passwords. Defenders responded with the same logic people use to protect a house: they reinforced the locks, added alarms, and watched the windows. Firewalls, encryption, and monitoring systems stood in for doors, deadbolts, and motion lights. The idea was to keep the intruder out. In both the burglary and the early breach, attacker and defender had as little contact with each other as possible.

Social engineering changes the premise. As Maurer’s big con shows, the swindler does not avoid the mark; he makes the mark his friend. The new threat does not pick the lock; it asks to be let in. Phishing emails, fake support calls, and cloned login portals imitate ordinary behavior so convincingly that the victim opens the door willingly. The modern attack begins with trust and ends with access. It is the same confidence game that Maurer documented; only the medium has changed.

Industrialized Trust

Once deception entered digital systems, it scaled dramatically. Automation made it possible to target thousands of people with messages that appeared individualized. Public directories, leaked data, and social media profiles provided enough context for each attack to sound personal. The craft of persuasion became a process. A single actor could simulate entire departments, each message crafted to mirror the style and timing of legitimate communication. In this environment, the distinction between technical breach and psychological manipulation blurred.

Artificial intelligence pushed this development further. Modern language models produce polished, grammatically correct messages in a corporate tone, while synthetic audio can reproduce voices with convincing accuracy. In 2019, a director at the U.K. subsidiary of the German energy company Innogy SE received a call from what seemed to be his CEO. The voice, which Europol later confirmed had been generated with AI, instructed him to transfer €220,000 to a supplier in Hungary. The transfer was completed before the deception was discovered. The case, reported by The Wall Street Journal, became an early example of how manufactured identity can override human caution.

These attacks succeed because they exploit the same mechanics that sustained the cons of the 20th century. They rely on rhythm, context, and credibility. Where the classic grifter learned to read facial cues, the modern one studies metadata. Heck, thanks to social media, you can probably learn more about a mark online than any grifter could in Maurer’s day! The method has evolved; the psychology has not.

Reconstructing Proof

Training and awareness programs still matter, but they cannot bear the full weight of defense. The future of protection lies in designing systems that verify identity as part of their function. Verification must occur at the moment of interaction, inside the same platforms where the work takes place. The goal is not to teach people to distrust but to make trust verifiable.

Traceless aligns with this philosophy by embedding identity verification directly into operational tools such as service desks, chat platforms, and identity systems. Each approval or credential exchange is tied to a confirmed human identity. Sensitive data travels through short-lived channels that expire once retrieved, removing the static information that attackers exploit. Verification is documented, while the message itself disappears. The effect is structural: security becomes a function of process, not memory.
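To make the idea of a short-lived channel that expires once retrieved more concrete, here is a minimal Python sketch of a one-time secret store. It is not Traceless’s implementation and makes no claim about how Traceless works internally. The class `EphemeralVault`, its `ttl_seconds` parameter, and the audit-log tuple format are hypothetical names chosen for illustration, and the sketch assumes `verified_identity` has already been established by some upstream identity check (for example, a single sign-on login). The point it shows: the secret is deleted on first successful read or on expiry, while the audit log records who retrieved it without ever storing the secret itself.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class _Entry:
    secret: str
    recipient: str      # identity the sender intends to receive the secret
    expires_at: float   # absolute expiry time, in the vault's clock units


@dataclass
class EphemeralVault:
    """Hypothetical one-time secret store (illustrative sketch only).

    Each secret can be read once, only by its intended recipient,
    and only before its time-to-live elapses.
    """
    ttl_seconds: float = 300.0
    clock: Callable[[], float] = time.monotonic
    _entries: Dict[str, _Entry] = field(default_factory=dict, init=False, repr=False)
    # Audit records hold (event, token, identity), never the secret itself.
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list, init=False)

    def put(self, secret: str, recipient: str) -> str:
        # An unguessable token acts as the retrieval link for the recipient.
        token = secrets.token_urlsafe(16)
        self._entries[token] = _Entry(secret, recipient, self.clock() + self.ttl_seconds)
        self.audit_log.append(("created", token, recipient))
        return token

    def retrieve(self, token: str, verified_identity: str) -> Optional[str]:
        entry = self._entries.get(token)
        if entry is None:
            return None  # unknown token, already read, or already purged
        if self.clock() >= entry.expires_at:
            del self._entries[token]  # expired: purge without revealing it
            self.audit_log.append(("expired", token, entry.recipient))
            return None
        if verified_identity != entry.recipient:
            # Wrong person: refuse, log the attempt, keep it for the real recipient.
            self.audit_log.append(("denied", token, verified_identity))
            return None
        del self._entries[token]  # burn after reading
        self.audit_log.append(("retrieved", token, verified_identity))
        return entry.secret


if __name__ == "__main__":
    vault = EphemeralVault(ttl_seconds=60)
    t = vault.put("temp-password-123", recipient="alice@example.com")
    print(vault.retrieve(t, "mallory@example.com"))  # refused
    print(vault.retrieve(t, "alice@example.com"))    # the secret, once
    print(vault.retrieve(t, "alice@example.com"))    # gone
```

One design choice worth noting: a request from the wrong identity is refused and logged but does not destroy the secret, so an impostor cannot burn a message before the real recipient reads it. A stricter variant might revoke the secret on any mismatch. This sketch keeps everything in memory; a real deployment would also need durable storage, encryption at rest, and server-side expiry.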

The evolution of social engineering reveals a consistent truth. Deception adapts, but so can verification. By treating identity as something demonstrated rather than assumed, organizations can close the gap that confidence artists have exploited for centuries. The con still depends on belief; the defense depends on proof.

If your organization handles sensitive approvals or system access, those interactions are now prime targets for AI-driven impersonation. Traceless integrates with your existing tools in under 10 minutes, adding identity verification and ephemeral messaging that make these attacks significantly harder to pull off. Book a demo to see how it works.